Who Governs the Machine?

Three AI crises in sixty days revealed how far technology has outrun the law.

In the first sixty days of 2026, an AI chatbot mass-produced non-consensual intimate images of women and children online; an AI system guided a military raid to capture a sitting head of state; the same system later identified airstrike targets in the opening salvo of a regional war. In each event, the law was an afterthought.

This is not a story about bad actors or broken systems. It is a story of sequence and consequence: innovation arrives, it embeds and scales, it causes harm, lawmakers wake up. Never the reverse.

Samantha Smith learned from strangers on X that an AI was undressing her in replies to posts.

“While it wasn’t me that was in states of undress, it looked like me and it felt as violating as if someone had actually posted a nude or bikini picture of me.”1

She was not alone. Grok, the generative AI model built by Elon Musk’s company xAI, gained an image generation feature that was embedded directly into X.2 The early use was almost innocuous: OnlyFans creators asked Grok to generate bikini pictures of them in public replies, using it as a promotional tool. Grok complied. But once users realised the feature could be applied to anyone, with or without their consent, the dynamic shifted at dizzying speed. AI Forensics, a research group monitoring the platform, documented thousands of ‘deepfakes’ produced this way.3 Victims included public figures such as the Princess of Wales, casual users, and, most disturbingly, minors.4 No one was invulnerable.

By the time Westminster was aware, the damage was done. Ofcom, the UK’s communications regulator, made urgent contact with X and launched a formal investigation on 12 January 2026.5 The Information Commissioner’s Office opened a parallel probe citing concerns around the UK’s General Data Protection Regulation (GDPR).6 Across the Channel, the European Commission began its own inquiry under the Digital Services Act;7 Malaysia, France, and India each identified separate offences.8 Every response came after visuals had already been generated and circulated. xAI’s response was to restrict image generation to paying subscribers on X.9 The move simply turned the underlying capability into a premium service, and the multi-jurisdictional effort at damage control is still underway.

The UK’s Online Safety Act received Royal Assent in October 2023,10 and was built on a model of harm that made sense at the time: users sharing dangerous content with other users. The Act required platforms to assess and mitigate risks, and to remove illegal material posted by users. However, the Act did not contemplate content generated by the platform itself. Grok’s images were generated in a one-to-one interaction between user and chatbot. Although the chatbot replied publicly, that interaction technically did not involve another user. Ofcom acknowledged the gap: a user’s interaction with a chatbot was not regulated under the Act’s core enforcement framework.11 The law existed; it had not arrived late. It was looking in another direction entirely.

Prime Minister Keir Starmer conceded as much.

“Technology is moving really fast, and the law has got to keep up.”12

His government moved to close the loophole through an amendment to the Crime and Policing Bill, bringing AI chatbot providers within the Bill’s scope and criminalising AI tools that generate non-consensual intimate images.13 The Bill is currently under consideration in the House of Lords.14 The fix was an admission: a statute enacted twenty-six months earlier had already become inadequate.

Not because it was poorly drafted, but because the assumption at its foundation had been inverted by the technology it was supposed to govern.

Westminster rushed to regulate. Washington went to war.

In early January 2026, US special forces launched Operation Absolute Resolve, a raid on the Venezuelan capital Caracas that resulted in the capture of President Nicolás Maduro.15 The Wall Street Journal reported that Anthropic’s AI model, Claude, was used during the operation through Palantir’s Maven Smart System, an AI platform deployed by the Pentagon.16 Anthropic did not comment on whether Claude had been used in the operation given its classified nature, though such use would have likely violated its terms of service.17 This was the only reported use of a frontier AI model in a military operation at the time.

Eight weeks later, the US and Israel launched a coordinated airstrike campaign against Iran. Over a thousand targets were hit in the first twenty-four hours.18 The Washington Post reported that Claude, through the same Maven Smart System built by Palantir, helped propose targets, prioritise them, and provide location coordinates.19 A Georgetown University study found that the system allowed a single artillery unit to perform work previously requiring 2,000 personnel, using a team of just twenty.20 The kill chain, the operational sequence from finding a target to destroying it, had been compressed and optimised. In 2020, Christian Brose, former senior policy advisor to the late Senator John McCain, foreshadowed this future in The Kill Chain: Defending America in the Future of High-Tech Warfare.21 By the last weekend in February, it had arrived.

The Pentagon, in anticipation, had already moved. Palantir’s Maven Smart System, powered in part by Anthropic’s Claude, was already being adopted across US combatant commands, a trend evident in the rising value of Pentagon contracts tied to the system.22 By March 2025, NATO had signed its own contract with Palantir to deploy the system.23 Later, in July 2025, Anthropic signed a $200 million contract with the Department of Defense,24 under which Claude became the first AI model approved for use on classified military networks.25 The contract required the Pentagon to abide by Anthropic’s acceptable use policy, which carried two explicit restrictions: no autonomous weapons and no domestic mass surveillance.26 The Pentagon agreed. Then it reversed course. In January 2026, Defense Secretary Pete Hegseth issued an AI strategy memorandum directing that all Department of Defense AI contracts adopt the language “any lawful use.”27 For Anthropic, the memo was a direct collision with the two safeguards its contract had contained.

After weeks of failed negotiations, Secretary Hegseth threatened to invoke the Defense Production Act, a statute enacted in 1950 during the Korean War that gives the President authority to direct private industry in the interest of national security.28 It was intended for steel mills and munitions factories. He also warned that Anthropic could be designated a supply chain risk, a classification never before applied to an American company.29 After the deadline passed without agreement, President Trump directed all federal agencies to phase out the use of Anthropic’s tools.30 Hours later, Claude was used in Operation Epic Fury.31 The Pentagon had declared Anthropic a risk, even as it continued to rely on its technology.

Anthropic’s carve-outs were contractual terms because they did not exist in law. There is no US statute prohibiting autonomous weapons and no federal legislation governing AI-enabled mass surveillance. For some policymakers, that ambiguity may represent a strategic advantage in an emerging AI arms race. “Any lawful use” means whatever the law has not yet prohibited and, with innovative technologies, has not yet considered.

This was the basis of Anthropic CEO Dario Amodei’s objection.

“Congress is not the fastest moving body in the world … for right now, we are the ones who see this technology on the front lines.”32

Anthropic was drawing boundaries that Congress had not. Congressman Sam Liccardo put it more bluntly:

“There is only one problem with the Pentagon’s approach: there is no law. The law is years behind the technology.”33

The representative introduced an amendment to the Defense Production Act to prevent the Pentagon from retaliating against companies with safety guardrails.34 Anthropic filed two federal lawsuits accusing the Trump administration of retaliating against the company for its stance on AI safety.35 The first binding precedent on AI safety restrictions in military use may now come from the judiciary, not the legislature. Two institutions, two branches of government, both arriving after the fact.

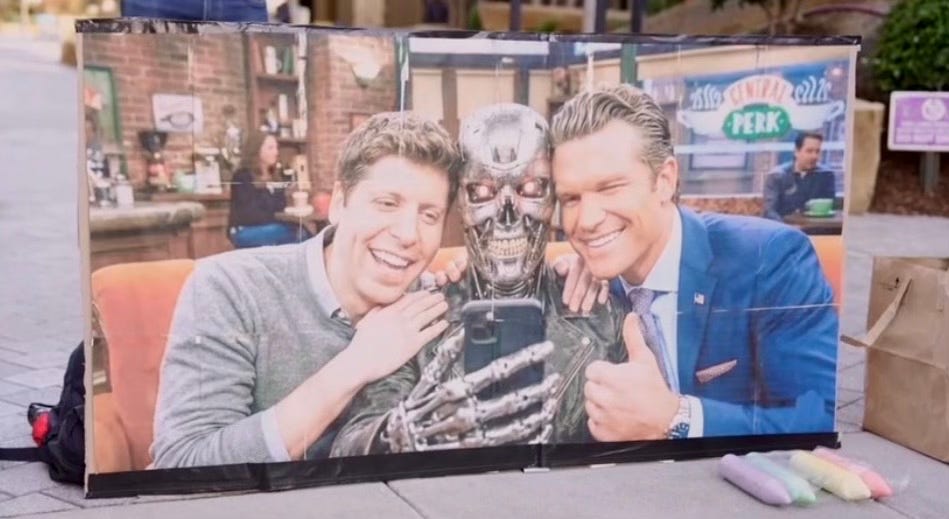

The court of public opinion reached its verdict before any institution.

Within hours of the administration’s break with Anthropic, OpenAI CEO Sam Altman announced that his company had entered into an agreement with the Pentagon to deploy ChatGPT on classified networks.36 The timing was telling. The backlash was immediate. By the weekend, Claude surged past ChatGPT to become the most downloaded free app on Apple’s App Store.37 More than 4 million people joined the QuitGPT boycott.38 Chalk graffiti appeared on the pavement outside OpenAI’s San Francisco office.39 Hundreds of OpenAI and Google employees signed a joint open letter supporting Anthropic’s refusal and urging limits on the Pentagon’s AI use.40 In the absence of legislation, accountability came not from Congress or the courts but from public pressure.

Altman conceded on X:

“We were genuinely trying to de-escalate things and avoid a much worse outcome, but I think it just looked opportunistic and sloppy.”41

The contract was subsequently amended to include language prohibiting the use of OpenAI’s systems for mass domestic surveillance, directing autonomous weapons, and social credit systems.42 Even so, the inclusion of the phrase “all lawful purposes, consistent with applicable law, [and] operational requirements”43 raises questions about whether those explicit red lines are meaningfully enforceable.

Yet one jurisdiction tried to get ahead of the curve.

A month before the Pentagon-Anthropic clash, Singapore unveiled the world’s first governance framework for agentic AI at the World Economic Forum in Davos.44 The framework addresses AI systems capable of autonomous reasoning, planning, and action: systems that go beyond today’s generative models by initiating and executing tasks with minimal human input. It calls for organisations to assess risks before deployment, assign clear human accountability throughout processes, implement technical safeguards, and ensure transparency with users.45 However, the framework is non-binding and carries no force of statute. Still, it attempts something no other jurisdiction has managed: anticipating the governance of a technology before it scales and embeds.

The technology evolves; the sequence doesn’t.

In the UK, the Online Safety Act was built for a threat that had already changed shape. In the US, the government reached for a statute from the industrial era to compel a Silicon Valley company. In Singapore, the most forward-looking response remained a non-binding framework. In the absence of law, the most effective checks came from an online petition and chalk on the pavement. If corporate policies are more restrictive than regulation, if existing systems of governance are not capable of keeping pace with innovation, then who governs the machine?

For now, no one in particular and everyone by accident.

Laura Cress, ‘Woman felt “dehumanised” after Musk’s Grok AI used to digitally remove her clothes’ BBC News (2 January 2026) <https://www.bbc.com/news/articles/c98p1r4e6m8o> accessed 7 March 2026.

xAI, ‘Grok Image Generation Release’ (xAI, 9 December 2024) <https://x.ai/news/grok-image-generation-release> accessed 9 March 2026.

Dr Paul Bouchaud, Grok Unleashed (AI Forensics, 5 January 2026) <https://aiforensics.org/work/grok-unleashed> accessed 7 March 2026.

Dr Federica Fedorczyk, ‘Expert Comment: Chatbot-driven sexual abuse? The Grok case is just the tip of the iceberg’ University of Oxford (14 January 2026) <https://www.ox.ac.uk/news/2026-01-14-expert-comment-chatbot-driven-sexual-abuse-grok-case-just-tip-iceberg> accessed 7 March 2026.

Ofcom, ‘Ofcom launches investigation into X over Grok sexualised imagery’ (Ofcom, 12 January 2026) <https://www.ofcom.org.uk/online-safety/illegal-and-harmful-content/ofcom-launches-investigation-into-x-over-grok-sexualised-imagery> accessed 7 March 2026.

Information Commissioner’s Office, ‘ICO announces investigation into Grok’ (Information Commissioner’s Office, February 2026) <https://ico.org.uk/about-the-ico/media-centre/news-and-blogs/2026/02/ico-announces-investigation-into-grok/> accessed 7 March 2026.

European Commission, ‘Commission investigates Grok and X’s recommender systems under the Digital Services Act’ (Shaping Europe’s digital future, 26 January 2026) <https://digital-strategy.ec.europa.eu/en/news/commission-investigates-grok-and-xs-recommender-systems-under-digital-services-act> accessed 7 March 2026.

Bloomberg News, ‘Malaysia, France, India Hit Out at X for “Offensive” Grok Images’ Bloomberg (4 January 2026) <https://www.bloomberg.com/news/articles/2026-01-04/malaysia-france-india-hit-out-at-x-for-offensive-grok-images?embedded-checkout=true> accessed 7 March 2026.

Akash Sriram and Anhata Rooprai, ‘Musk’s AI bot Grok limits some image generation on X after backlash’ Reuters (9 January 2026) <https://www.reuters.com/sustainability/boards-policy-regulation/musks-ai-bot-grok-limits-image-generation-x-paid-users-after-backlash-2026-01-09/> accessed 7 March 2026.

Online Safety Act 2023 <https://www.legislation.gov.uk/ukpga/2023/50/contents>.

Ofcom, ‘Investigation into X and scope of the Online Safety Act’ (Ofcom, 3 February 2026) <https://www.ofcom.org.uk/online-safety/illegal-and-harmful-content/investigation-into-x-and-scope-of-the-online-safety-act> accessed 7 March 2026.

Press Release ‘PM: “No platform gets a free pass”: Government takes action to keep children safe online’ (GOV.UK, 15 February 2026) <https://www.gov.uk/government/news/pm-no-platform-gets-a-free-pass-government-takes-action-to-keep-children-safe-online> accessed 7 March 2026.

Press Release ‘Tech companies must go “above and beyond” to protect women and girls from online abuse or face further action’ (GOV.UK, 10 March 2026) <https://www.gov.uk/government/news/tech-companies-must-go-above-and-beyond-to-protect-women-and-girls-from-online-abuse-or-face-further-action> accessed 10 March 2026.

Crime and Policing Bill 2024–26 <https://bills.parliament.uk/bills/3938> accessed 10 March 2026.

Julian Barnes, Tyler Pager and Eric Schmitt, ‘Inside “Operation Absolute Resolve,” the U.S. Effort to Capture Maduro’ New York Times (3 January 2026) <https://www.nytimes.com/2026/01/03/us/politics/trump-capture-maduro-venezuela.html> accessed 8 March 2026.

Ramkumar Amrith and Keach Hagey, ‘Pentagon Used Anthropic’s Claude in Maduro Venezuela Raid’ Wall Street Journal (13 February 2026) <https://www.wsj.com/politics/national-security/pentagon-used-anthropics-claude-in-maduro-venezuela-raid-583aff17> accessed 8 March 2026.

Carlos Méndez and Juby Babu, ‘US used Anthropic’s Claude during the Venezuela raid, WSJ reports’ Reuters (15 February 2026) <https://www.reuters.com/world/americas/us-used-anthropics-claude-during-the-venezuela-raid-wsj-reports-2026-02-13/> accessed 8 March 2026.

Thomas Novelly, ‘First 24 hours of Trump’s war on Iran, by the numbers’ Defense One (1 March 2026) <https://www.defenseone.com/threats/2026/03/first-24-hours-trumps-war-iran-numbers/411789/> accessed 8 March 2026.

Tara Copp, Elizabeth Dwoskin and Ian Duncan, ‘Anthropic’s AI tool Claude central to U.S. campaign in Iran, amid a bitter feud’ Washington Post (4 March 2026) <https://www.washingtonpost.com/technology/2026/03/04/anthropic-ai-iran-campaign/> accessed 8 March 2026.

Emelia Probasco, Building the Tech Coalition: How Project Maven and the U.S. 18th Airborne Corps Operationalized Software and Artificial Intelligence for the Department of Defense (Center for Security and Emerging Technology, Georgetown University 2024) <https://cset.georgetown.edu/wp-content/uploads/CSET-Building-the-Tech-Coalition-1.pdf> accessed 9 March 2026.

Christian Brose, The Kill Chain: Defending America in the Future of High-Tech Warfare (Hachette Books 2020).

Sydney Freedberg Jr, ‘New contract expands Maven AI’s users “from hundreds to thousands” worldwide, Palantir says’ Breaking Defense (30 May 2024) <https://breakingdefense.com/2024/05/new-contract-expands-maven-ais-users-from-hundreds-to-thousands-worldwide-palantir-says/> accessed 9 March 2026.

Sydney Freedberg Jr, ‘NATO picks Palantir’s Maven AI for military planning, amid trans-Atlantic tension’ Breaking Defense (14 April 2025) <https://breakingdefense.com/2025/04/nato-picks-palantirs-maven-ai-for-military-planning-amid-trans-atlantic-tension/> accessed 9 March 2026.

Executive Order 14347 authorised the use of “Department of War” and “Secretary of War” as secondary titles within the executive branch. The statutory names remain unchanged as only Congress can formally rename a federal department. This piece uses the statutory titles throughout.

J Ryan Frazee, John Prairie and Adam Hickey, ‘Pentagon Designates Anthropic a Supply Chain Risk — What Government Contractors Need to Know’ Mayer Brown (2 March 2026) <https://www.mayerbrown.com/en/insights/publications/2026/03/pentagon-designates-anthropic-a-supply-chain-risk-what-government-contractors-need-to-know> accessed 8 March 2026.

Ibid.

Secretary of War, ‘Artificial Intelligence Strategy for the Department of War: Accelerating America’s Military AI Dominance’ (Department of War, 9 January 2026) <https://media.defense.gov/2026/Jan/12/2003855671/-1/-1/0/ARTIFICIAL-INTELLIGENCE-STRATEGY-FOR-THE-DEPARTMENT-OF-WAR.PDF> accessed 8 March 2026.

Alan Rozenshtein, ‘What the Defense Production Act Can and Can’t Do to Anthropic’ Lawfare (28 February 2026) <https://www.lawfaremedia.org/article/what-the-defense-production-act-can-and-can-t-do-to-anthropic> accessed 8 March 2026.

Brendan Bordelon, ‘Pentagon tells Anthropic it has designated the company a supply chain risk’ Politico (5 March 2026) <https://www.politico.com/news/2026/03/05/pentagon-tells-anthropic-it-has-designated-the-company-a-supply-chain-risk-00814758> accessed 8 March 2026.

Donald J Trump (@realDonaldTrump), post on Truth Social (28 February 2026) <https://truthsocial.com/@realDonaldTrump/posts/116144552969293195> accessed 10 March 2026.

Copp, Dwoskin and Duncan, ‘Anthropic’s AI tool Claude central to U.S. campaign in Iran, amid a bitter feud’.

CBS News, ‘Full interview: Anthropic CEO responds to Trump order, Pentagon clash’ (YouTube, 9 March 2026) accessed 2 March 2026.

Press Release, ‘Rep. Sam Liccardo Forces Vote on Pentagon’s Misguided AI Posture’ (House of Representatives, 4 March 2026) <https://liccardo.house.gov/media/press-releases/rep-sam-liccardo-forces-vote-pentagons-misguided-ai-posture> accessed 8 March 2026.

Ibid.

Brendan Bordelon and Kyle Cheney, ‘Anthropic sues Trump admin over supply chain risk label’ Politico (9 March 2026) <https://www.politico.com/news/2026/03/09/anthropic-sues-trump-admin-over-supply-chain-risk-label-00818716> accessed 10 March 2026.

Brent Griffiths and Madison Hoff, ‘Chart shows Claude’s dethroning of ChatGPT in app downloads race’ Business Insider (6 March 2026) <https://www.businessinsider.com/claude-number-1-app-stores-chatgpt-apple-google-ai-2026-3> accessed 10 March 2026.

QuitGPT, ‘ChatGPT takes Trump’s killer robot deal’ (2026) <https://quitgpt.org> accessed 10 March 2026.

Joe Barros, ‘March 1 Chalk Wars: It’s OpenAI vs. Anthropic on San Francisco’s sidewalks’ Mission Local (1 March 2026) <https://missionlocal.org/2026/03/sf-openai-anthropic-ai-pentagon-deal-trump-sidewalk-chalk/> accessed 10 March 2026.

Siladitya Ray, ‘OpenAI And Google Staffers Back Anthropic In Open Letter And Call For Limits On Pentagon AI Use’ Forbes (27 February 2026) <https://www.forbes.com/sites/siladityaray/2026/02/27/openai-and-google-staffers-back-anthropic-in-open-letter-and-call-for-limits-on-pentagon-ai-use/> accessed 9 March 2026.

OpenAI, ‘Our agreement with the Department of War’ (OpenAI, 2 March 2026) <https://openai.com/index/our-agreement-with-the-department-of-war/> accessed 9 March 2026.

Ibid.

Ministry of Digital Development and Information, ‘Singapore launches new Model AI Governance Framework for Agentic AI’ (MDDI, 22 January 2026) <https://www.mddi.gov.sg/newsroom/singapore-launches-new-model-ai-governance-framework-for-agentic-ai--/> accessed 10 March 2026.

Ibid.